CVPR and EAI took a lot out of me, and some unexpected stuff came up. Regular blogging will resume next week, once I return to sanity land.

-the Centaur

Words, Art & Science by Anthony Francis

Got home from Con Carolinas, and in addition to the roof issues and the fridge issues now the door to the garage is kaput. More tomorrow.

Drew today, though.

-the Centaur

not sure what's going on here ... stay tuned.

-the Centaur

Yeah, he's up there. Not sure how, but he is. Hope he's not stuck. Ah, just went out to check, and he's gone, so I assume he moved on. Come to think of it, I wonder if he's the same as this guy:

This little guy got in and disappeared into the fireplace - I assumed he fell from the chimney, but he's thin enough maybe to have wormed in a windowframe perhaps? Not sure, the other guy looks thicker about the middle, but it may be the case that he ate something.

Hypothesis is, the little guys are immature versions of this handsome fellow, a rat snake perhaps, who is also a climbing mofo ...

Snek!

-the Centaur

No, this isn't an April Fool's joke: I'm the Author Guest of Honor at Clockwork Alchemy 2024! In recognition of my steampunk novel and many steampunk stories, my long association with Clockwork Alchemy, and the fact that they were not able to chase me away (even with a broom), the Clockwork folks have honored me with even more programming than normal! More seriously, though, there will be an author tea, presentations on neurodiversity, an audio reading of Jeremiah Willstone and the Choir of Demons, and even the obligatory airships panel (though this year it will be a more general panel on steampunk vehicles).

More news as it develops!

-the Centaur

Thanks, mold, for making me suspicious of every new pummelo, no matter how fresh and delicious. When I have actually gotten sick off a food, sometimes I develop a lifelong aversion to it - like chili burgers, lemon bars, and pump-flavored sodas, the three things I remember eating before my worst episode of food poisoning. However, apparently finding something rotten just as you eat it is a close second.

Sigh. Here's hoping this fades.

-the Centaur

Pictured: a tasty and delicious pummelo, but even so, I can't look at them the same. Is there an evil demon face embedded in that, thanks to pareidolia?

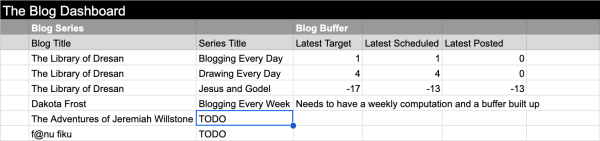

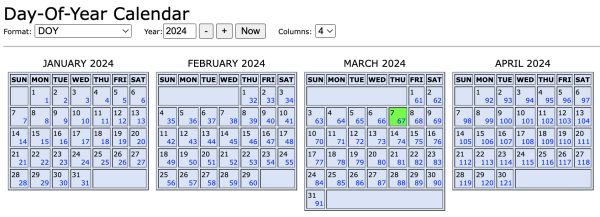

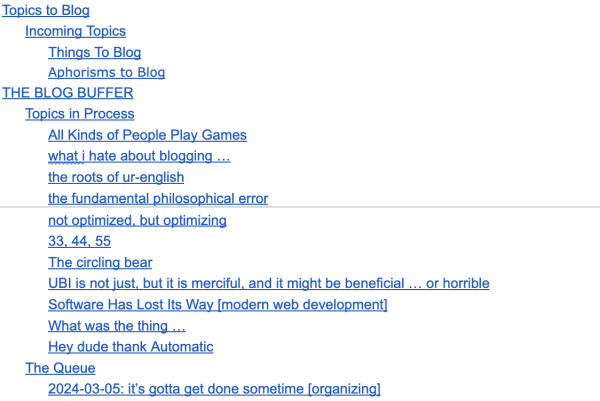

The title was supposed to say "that blog buffer" but I'm going with the misspelling. The critical thing to do with the buffer is to get far enough ahead that you can not just coast for a day or two, but take out the time to do more ambitious projects, like "fix it the way that it works" or "33 44 55", two posts that I've been putting off because I haven't been giving myself enough time to blog.

Well, according to the NOAA's day-of-year calendar, post sixty-eight needs to go up Friday, so once I schedule this post, "the buffer" will give me until Saturday to come up with a really good idea.

I'm working on it, I'm working on it!

Blogging every day.

-the Centaur

Pictured: A few screencaps: the "The Blog Dashboard" Google Sheet, the NOAA Day of Year Calendar, and "Blog This or Code It!", the Google Doc where I dump ideas that I hope to turn into blogposts.

He sure looks upset. Are you upset? I think you’re upset.

You’re still hanging around for scritchies though.

Maybe not so upset.

Who can tell.

-the Centaur

Pictured: a cat, looking very upset. Well. Maybe not so upset.

What the heck is a nerd, anyway?

I've learned a lot about neurodiversity in the past months - first, after having the crazy idea of launching yet another anthology, this one about neurodivergent people encountering aliens, and second, after coming to grips with my own neurodivergence (social anxiety disorder with perhaps a touch of undiagnosed autism). We want The Neurodiversiverse Anthology to land well with its intended audience, and need to get it right!

But it struck me that there's a lot of unhelpful cross-stereotyping between autistic folks and nerd and geek culture. Sure, there are autistic people who become intensely interested in "special topics", but sometimes that special topic is a sport or other "socially acceptable" activity, making it easier for autistic people to mask. And as Devon Price points out in the book Unmasking Autism, autistic people have specific bottom-up processing styles which are different from the top-down, "allistic" style of so-called "neurotypical" people. So just being obsessed with a special topic doesn't make you autistic, nor vice versa.

In fact, speaking as a proud member of "nerd" and "geek" culture, my social group had our own definitions of what "nerd" and "geek" meant, which indicated a difference in thinking styles, but didn't necessarily map to an actual neurodivergence. Geekdom in particular meant a certain kind of out-of-the-box thinking that doesn't align with what I read about the processing styles of autistic folks - not to say that these styles couldn't overlap, or even that they might frequently co-occur, but that "geek" had its own meaning.

That made me think back on conversations with a friend who was once called a "geek" by someone who meant it as an insult. His response? "Yes, I am - and you're not. Ha, ha, ha!" To him, it was a badge of honor, as it signified a deeper understanding of certain systems of the world and a different way of thinking - not neurodivergent, per se, but just different. We had a long conversation about different words and their nuances, and it led me to think about how these words have lurking meanings in my head.

So here's my attempt to unpack that terminology a little bit:

So one point I'm trying to make here is that nerding out about something can take you places. Sometimes it takes you to a deep understanding of a subject matter, which sometimes makes people uncomfortable; sometimes that turns out to be very lucrative, and sometimes that turns out to be ostracizing. But, even then, sometimes the people we think are the nuttiest turn out to be the most brilliant people.

But another point I'm trying to make is that nothing about geeking out really has anything to do with neurodivergence - it's a pattern of behavior which occurs in neurodivergent and neurotypical people alike. Perhaps an autistic person might geek out about something, or perhaps they might not. Perhaps a geek might have autistic tendencies, or perhaps they might not. Perhaps some of these traits are often found together, or perhaps, even if that co-occurrence is actually real, it can distract us from looking sincerely at the unique and whole human beings we are interacting with, and collapsing these different ways of looking at people into a single all-encompassing category is unnecessary stereotyping.

Or, put another way, if you know one autistic person, you know one autistic person, and if you know one geek, you know one geek, and there's no guarantee that knowing one tells you much about the other.

-the Centaur

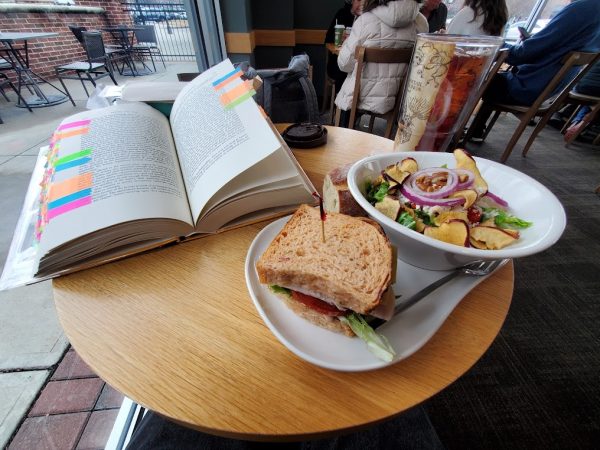

Recently I went to do something in Mathematica - a program I've used hundreds if not thousands of times - and found myself stumped on a simple issue related to defining functions. I've written large, complicated Mathematica notebooks, yet this thing I'd done hundreds of times was stymieing me.

But - yes - I'd done it hundreds of times; but not regularly in the past year or so.

My knowledge had gone stale.

Programming, it appears, is not like riding a bike.

What about other languages? I can remember LISP defun's, mostly, but would I get a C++ class definition right? I used to do that professionally, eight years ago, and have published articles on programming C++ ... but I've been writing almost exclusively Python and related scripting languages for the past 7 years.

Surprisingly, my wife and I had this happen in real life. We went to cook dinner, and found that some of the stuff in the pantry had gone stale. During the pandemic, you see, we bought ahead, since you couldn't always find things, but we consumed enough of our staples that they didn't go stale.

Not so once the rate of consumption dropped just slightly - eating out 2-3 times a week, eating out for lunch 2-3 times a week - with a slight drop in variety. Which meant the very most common staples were consumed, but some of the harder-to-find, less-frequently-used stuff went bad.

We suspect some of it may have had near-expired dates we hadn't paid attention to, but now that we're looking, we're carefully looking everywhere to make sure our staples are fresh.

Maybe, if there are skills we want to rely on, we should work to keep those skills fresh too.

Maybe we need to do more than just "sharpen the saw" (the old adage that work goes faster if you take the time to maintain your tools). Perhaps the saw needs to be pulled out once in a while and honed even if you aren't sawing things regularly, or you might find that it's gone rusty while it's been stored away.

-the Centaur

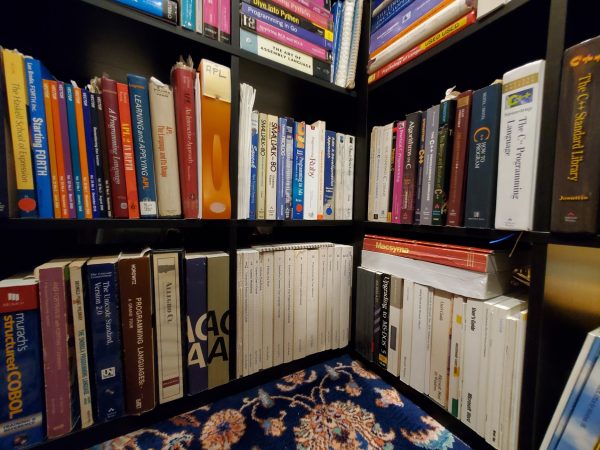

Pictured: The bottom layers of detritus of the Languages Nook of the Library of Dresan, with an ancient cast-off office chair brought home from the family business by my father, over 30 years ago.

Somehow, inadvertently, I caused the previous picture's post to get blurred in transport. Below is a better version, which seems to have come through much clearer:

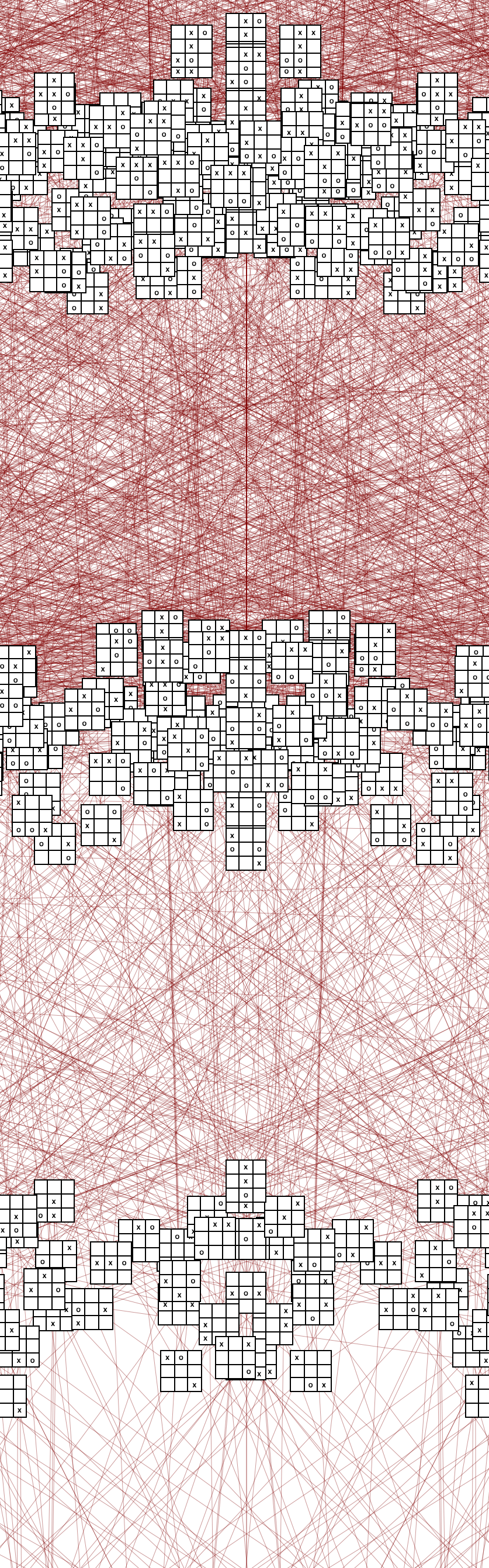

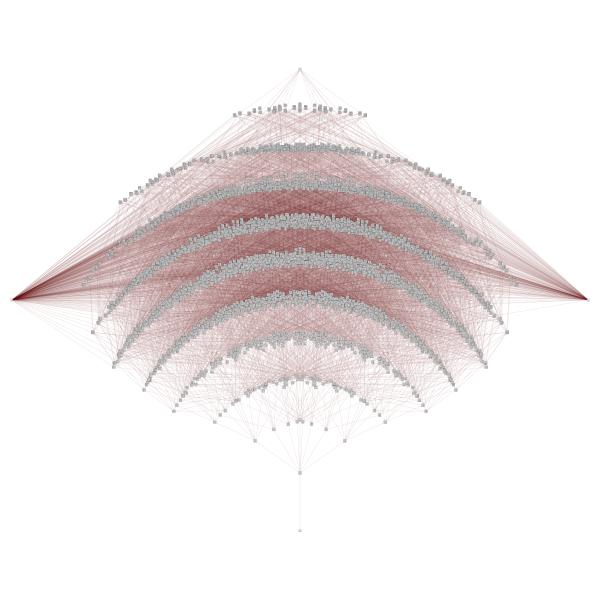

This is from my blogpost "All the Transitions of Tic Tac Toe, Redux". Apparently the full-size image is no longer available (probably because it's close to 80 megabytes in size, and whatever file hosting I was using to put it up is broken) but a "smaller" version is below, only 12 megabytes in size (or here):

Funny ... I long remembered this as being the topic of "Don't Fall Into Rabbit Holes" but that turns out to have been a completely different project.

-the Centaur

In case it isn't clear, when I took on the Blogging Every Day project, I hoped to get at least one post a day for the full year. Today, the 19th of February, is allegedly the 50th day of the year (according to On This Day in Math, this is the smallest number that can be written as the sum of two squares in two different ways, 1+49 and 25+25 ... who knew? Pat Ballew, apparently). But by my count this is only the twenty-ninth blogpost in this series (not counting blog posts done for other reasons), so I'm fifty minus twenty-nine, or twenty-one, posts behind where I should be for the day. And I need to be doing at least two of these a day to get back on track.
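For anyone who wants to check that arithmetic, here is a minimal Python sketch. The year (2024) and all of the function names are assumptions made up for illustration; February 19th comes out as day 50 in any year, and the "two squares" check assumes positive integers only (so 0 + 25 doesn't count as a way of writing 25).

```python
import math
from datetime import date


def day_of_year(d: date) -> int:
    """Return the 1-based day of the year for a date."""
    return d.timetuple().tm_yday


def posts_behind(d: date, posts_so_far: int) -> int:
    """One post per day is the goal, so the deficit is day-of-year minus posts."""
    return day_of_year(d) - posts_so_far


def two_square_sums(n: int) -> list[tuple[int, int]]:
    """All pairs (a, b) of positive integers with a <= b and a*a + b*b == n."""
    pairs = []
    a = 1
    while 2 * a * a <= n:  # a <= b implies 2*a*a <= n
        b_squared = n - a * a
        b = math.isqrt(b_squared)
        if b * b == b_squared:
            pairs.append((a, b))
        a += 1
    return pairs


def smallest_with_two_representations() -> int:
    """Smallest n that is a sum of two positive squares in two different ways."""
    n = 1
    while len(two_square_sums(n)) < 2:
        n += 1
    return n


if __name__ == "__main__":
    today = date(2024, 2, 19)  # assumed year; any year gives day 50
    print(day_of_year(today))
    print(posts_behind(today, posts_so_far=29))
    print(two_square_sums(50))
    print(smallest_with_two_representations())
```

The deficit is just the day number minus the post count, which is where the twenty-one comes from.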

Just so we're clear.

-the Centaur

Pictured: What was behind my head when I was taking that picture of King's Fish House.

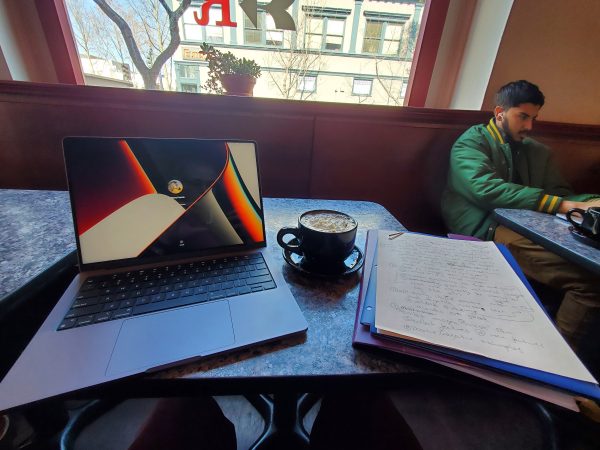

... I miss the glory days when you were open until 10 (and Bookbuyers was open to midnight, just up the street) but you're still a great place to grab a mocha, get together with techy friends, and work on a project.

What's amazing about the Bay Area is how much technology is just milling around in the ether. I practically tripped on a robot on the way to the meeting, some people at a nearby table were talking about self-driving cars, day before yesterday the people next to me were talking about robots, reinforcement learning and my colleagues, and I ran into three techy friends, one of whom introduced me to some more robot folk.

What a place to be, and what a time to be alive.

Blogging every day.

-the Centaur

Very tired and sleepy, so you get graffiti ... good night.

-the Centaur

Two tomahawks in all but bone for the high school gang's 30th annual "Edgemas" party, prepped with my own custom almost-dry rub and set aside to rest for 24 hours prior to a reverse-sear:

I hear it turned out pretty well. :-D

-the Centaur

It's a [re]start. Welcome to 2023, everyone.

-the Centaur

Well, it looks like the way WordPress is going to pull the Classic Editor out of my cold, dead hands is to screw up the formatting of any post published with the Classic Editor. The only way it seems to get posts to appear correctly in the blog roll is to use the new Gutenberg garbage. I will be updating posts a few at a time to try to overcome this. The problem is only "new" Classic Editor posts ... the older content in the blog doesn't appear to be affected, so hopefully it won't take too long if I update a few a day.

-the Centaur