"Discretion" is sometimes defined as "the freedom to decide," as in "a judge exercising their discretion," or in the related sense of "speaking with care," as in "a confidant's discretion can be relied upon." Both are closely related, in my mind, to "discernment," the ability to judge well, a word which has been co-opted in Christian circles to refer to examining things without immediate judgment to obtain spiritual guidance.

But when I say discretion, I mean taking each situation case by case and applying one's best judgment without relying on pre-decided rules, as a method for dealing with the inevitable limitations placed on us by Godel's Incompleteness Theorem - or, in plain English, exercising your judgment because rules will fail you.

A theorem is a statement that follows from its definitions, so it is true whether we want it to be true or not. "Two plus two is four", believe it or not, is a theorem: writing S(x) for "the number after x", it communicates the idea that A(S(S(0)),S(S(0))) - in English, "plus two two" - is S(S(S(S(0)))) - in English, "four" - because of the definition of A(,) - in English, "plus". There are times when the theorem isn't appropriate - for example, trying to "add" merging clouds - but you cannot escape it.
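For the curious, here is a minimal sketch of that arithmetic in Python. It assumes the usual textbook definition of "plus" (A(m, 0) is m, and A(m, S(n)) is S(A(m, n))); the class names `Zero` and `S` and the helper `to_int` are my own illustrative choices, not anything standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zero:
    """The number 0."""


@dataclass(frozen=True)
class S:
    """S(x): the number after x."""
    pred: object


def A(m, n):
    """'Plus', assuming the usual recursive definition:
    A(m, 0) = m and A(m, S(n)) = S(A(m, n))."""
    if isinstance(n, Zero):
        return m
    return S(A(m, n.pred))


def to_int(n):
    """Count the S(...) wrappers so we can read the result in English."""
    count = 0
    while isinstance(n, S):
        count += 1
        n = n.pred
    return count


two = S(S(Zero()))
four = S(S(S(S(Zero()))))

# "plus two two" is "four", purely by unfolding the definition of A.
assert A(two, two) == four
print(to_int(A(two, two)))  # 4
```

Nothing in there "decides" that two plus two is four; it falls out of the definitions, which is the point.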

The fancy-sounding concept "Godel's Incompleteness Theorem" is a theorem, and in English it means that rules will always fail you by being wrong or incomplete. Its formal statement is about the "incompleteness" of any consistent system complex enough to do arithmetic, and about the fact that such a system cannot prove its own consistency. The mathy version of it runs a dozen pages, but shelves upon shelves of textbooks have been written on its implications.

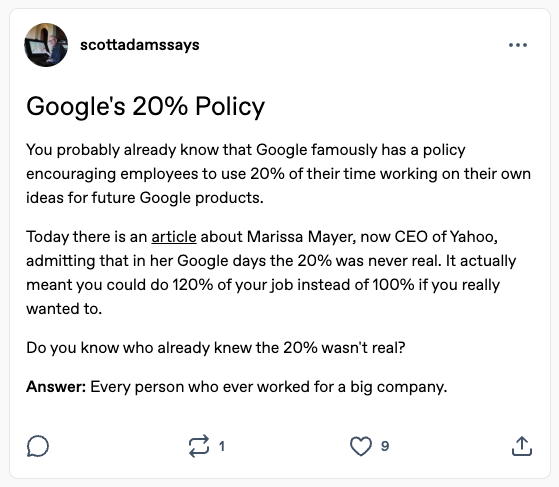

But in practical terms it means that no matter how complex the set of rules you create, either that system must inevitably fail to cover some case, or it must contain mistakes, or it must be so trivial as to be useless. Which means that no one - no priest nor politician nor administrator nor ordinary people trying to manage their own lives - can come up with a set of rules that will always work.

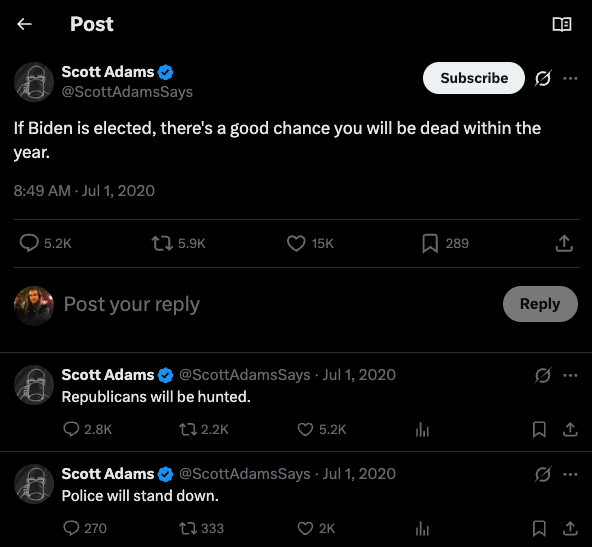

That means we must always exercise our discretion. This is a dangerous thing. Christian theologians love to argue that people love to rationalize, to come up with explanations that justify their misbehavior; but this does not prevent the rules those theologians come up with from failing.

I myself am fond of saying that in a world with imperfect information, decisions cannot be made reliably based on the information that we have in front of us, and that we have to rely on policies that extend beyond those immediate situations; but even those policies may inevitably fail.

But the possibility of failure does not absolve us from the responsibility of trying. To do the best we can in the world, we need to think back - and think ahead - and come up with the best rules that we can, so we don't get fooled by our own desires or the appearance of the situation in the moment; but in the moment, we must also apply our discretion, keeping a careful eye out for conditions that undermine the assumptions behind our clever rules and force us back to the drawing board for a new look.

This process of exercising discretion is fundamentally human. I don't mean the emotional statement "oh, this is a basic part of the human experience" - though it is that - but actually a more technical statement of how human cognition works: it's a part of how we think called universal subgoaling and chunking.

Normally, when we think, we're actually deploying many learned rules extremely swiftly to make progress, an experience of flow that we find effortless. But when the cognitive engines we call our "minds" reach an "impasse" where we don't know how to move forward on our goals, we generate new "subgoals" to resolve those impasses, marshalling all the knowledge we have to try to solve the problem. It's a difficult, effortful process, prone to failure; but if we do succeed, our brains store this solution as a new "chunk", a new if-then rule which we can use to think more swiftly and effectively in the future.
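To make that loop concrete, here is a toy sketch in Python. It is emphatically not a real cognitive architecture: the `ToyAgent` class, its `moves` map, and the use of breadth-first search as the "effortful" part are all assumptions of mine for illustration. The shape is what matters: fire a learned rule if one matches, hit an impasse if none does, work the problem out the slow way, and store the answer as new if-then rules.

```python
from collections import deque


class ToyAgent:
    """Hypothetical toy: learned rules first, slow search on impasse."""

    def __init__(self, moves):
        self.moves = moves    # assumed world model: state -> reachable states
        self.chunks = {}      # learned if-then rules: (state, goal) -> next state
        self.impasses = 0     # how often we had to stop and think

    def next_step(self, state, goal):
        key = (state, goal)
        if key in self.chunks:
            # A learned rule fires: fast and effortless.
            return self.chunks[key]
        # Impasse: no rule applies, so set a subgoal and work it out.
        self.impasses += 1
        path = self._search(state, goal)
        if path is None:
            return None  # the effortful process can fail
        # Chunking: store every step of the solution as a new rule.
        for here, there in zip(path, path[1:]):
            self.chunks[(here, goal)] = there
        return self.chunks.get(key)

    def _search(self, start, goal):
        """Slow, deliberate problem solving (breadth-first search)."""
        frontier = deque([[start]])
        seen = {start}
        while frontier:
            path = frontier.popleft()
            if path[-1] == goal:
                return path
            for nxt in self.moves.get(path[-1], []):
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append(path + [nxt])
        return None

    def solve(self, state, goal):
        route = [state]
        while state != goal:
            state = self.next_step(state, goal)
            if state is None:
                return None
            route.append(state)
        return route


agent = ToyAgent({"a": ["b"], "b": ["c"], "c": ["d"]})
first = agent.solve("a", "d")    # one impasse, then the new chunks carry us
impasses_after_first = agent.impasses
second = agent.solve("a", "d")   # no impasse at all: pure "flow"
```

On the second run the agent never stops to think, because the first run's hard-won solution is now a set of rules. That is also exactly where the danger lies: those rules are only as good as the situation they were learned in.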

[As an aside, one of the actual differences between modern "AI" and human thought --- or, more properly, between modern LLMs and so-called "cognitive architectures" modeled on actual human thinking --- is that the LLMs are explicitly not set up to do this. Their learning process is much more akin to acquiring a lot of crystallized rules, or to manipulating those rules in a limited workspace in something akin to subgoaling, but they generally are not set up to do chunking. In a way, we don't want them to; we don't want chunks from my chat session leaking into your session, giving you my answers. But diving into how almost every critique you've ever heard of modern "AI" is a load of dingo's kidneys would be too much of a digression.]

In a sense, we as people and systems are often not as smart as our own brains trying to solve problems, relying more on fixed rules, societal norms, past traditions, and unjustified feelings than on our own brains, which have the advantage of being able to immediately tell whether their if-then rules are failing to give us the answers we need (whether those are the right answers is another question). It takes a deliberate effort to make sure we're not running on autopilot, and all too often, we stick to the rules for no reason.

Don't do that. Look at the situation; exercise your discretion.

You, and the world, will be better off if you do.

-the Centaur

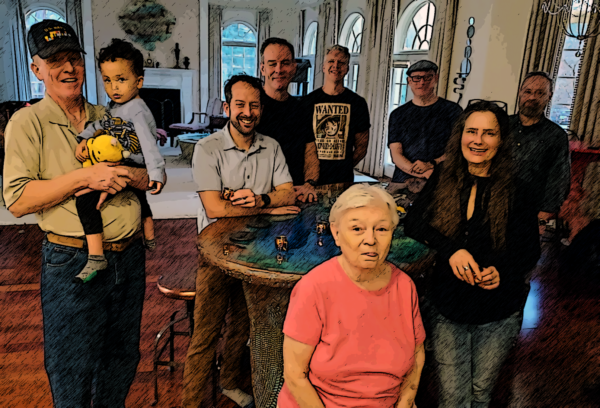

Pictured: Discretion is the better part of valor when spending a vacation with my wife in a town with a lot of good vegan food options. After several days of overeating ... I had a salad for dinner tonight at Craft Roots, because I knew my wife was going to order chocolate mousse with ice cream for dessert.