Another key concept that I think is critically important for science and life is "getting traction." A lot of things we do as humans simply don't get us anywhere - for example, most work in philosophy. That may sound like I'm being snarky, and maybe I am, but it's a common trope that we've been discussing things like free will, the nature of time, and Zeno's paradox for thousands of years with no real resolution.

But the problem is that, contra Immanuel Kant, philosophy cannot be reduced to an enterprise that tries to answer "What can I know?", "What should I do?", "What can I hope?", and "What is a human being?" - though those questions are critically important to philosophy. Similarly, contra Ayn Rand, philosophy cannot be reduced to "Where am I?" (metaphysics), "How do I know?" (epistemology), and "What should I do?" (ethics) - though these disciplines are critically important to philosophy.

No, philosophy's job is to map the options of thought. Perennial questions like free will remain perennial because there are many ways to think about the problem and a responsible philosopher won't just attempt to "solve" it, they'll outline the different ways that we can think about it (as Daniel Dennett tried to do in Elbow Room: The Varieties of Free Will Worth Having). Like Saint Thomas Aquinas, I believe that you have free will whether you want it or not - though my argument is based on the Halting Problem - but even Aquinas admits that if your definition of free will excludes the possibility of a mechanism by which the will works, then he can't help you. So even if we reached a definitive answer to the question of free will eight hundred years ago, modern treatments cannot resist revisiting the entirety of the argument.

Leaving us feeling like we're getting nowhere.

To make progress, we need some way of moving on - some way of selecting an idea as the right one. And that can't happen from within philosophy itself - not just because I argue that "solving" isn't its job, but because of a deeper problem that Ayn Rand calls the Primacy of Consciousness Fallacy - the idea that ideas are more important than reality. The way we think about problems does not change what is. For example, the Ship of Theseus is a famous "thought experiment in identity metaphysics" (according to Vision in the Marvel Universe) about a boat whose timbers are replaced one by one until nothing of the originals remains, raising the question: is it the same boat or not? There are strong reasons to say that it is, and that it isn't - but those are just options for thinking about it. Choosing one doesn't change the actual physical nature of the boat.

To get anywhere with these questions, we need to get evidence. To take a hypothetical example, if we were in a horror movie, and the fully-gutted Ship of Theseus started chasing people down to reclaim its lost timbers, we might start to suspect that it was, indeed, the same ship. Conversely, if we were in a science fiction movie, and no-one who went through a transporter ever remembered who they were, we might start to suspect that their identity was not preserved, and that a matter-energy scrambler was not a good way to transport people from point A to B no matter how much money it saved on the show's budget.

But these are hypotheticals. To really get anywhere with a real question - to get traction in the space of ideas that moves us from a set of options on to a definitive answer - you need more than an argument that convinces yourself; you need to start looking for ways to get evidence that distinguishes between the options, evidence that can be shared with other people, or replicated by them, to help them make the same move.

You can see this clearly when looking at the philosophy of general relativity, which explores staggeringly speculative concepts like thunderbolts (fractures in spacetime that spread at the speed of light) and supertasks (performing infinite tasks like computing the digits of pi in one part of spacetime and reading them off in another, dilated part of spacetime, hoping to find that elusive last digit). These questions involve scenarios we can't set up and tasks we cannot perform, and it's difficult to see how they could be resolved.

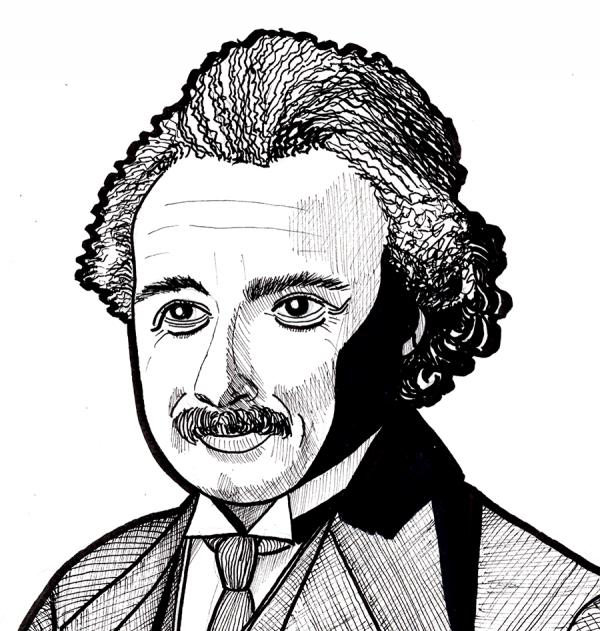

But these mental explorations help us understand what directions to take in our scientific explorations. The philosopher Mach wondered whether a rotating object in an empty universe could really be said to spin. It's a challenge to set up an entire universe just to answer a hypothetical - but Mach's exploration of the problem helped Einstein formulate his theory of general relativity, which in turn had consequences that were tested by the scientist Eddington in a famous expedition. Eddington traveled to photograph a solar eclipse, which showed that starlight around the sun was bent the way Einstein predicted - in turn, giving us a probable answer to Mach's question that, yes, the object would rotate with respect to itself.

Getting traction is an important part of not just science but our everyday lives. I always get suspicious when I go to the doctor and they purport to make a diagnosis without running tests to verify whether they're right. Once, when my arm was broken and the bone plate was slow to heal, I went to a parade of doctors who failed to resolve the problem over a 2 year period. Doctors at the SOAR group ordered a CAT scan, identified a gap in the bone, and scheduled an exploratory surgery, during which they found a suture left from the original surgery that had caused a bulge in the bone and the appearance of a gap. My arm was fine, and likely had been fine for 2 years - but the other doctors didn't find this out because they didn't run the test.

The necessity of getting traction is why, in programming, I hate nondeterministic builds (where sometimes it works and sometimes it doesn't) and hate debugging heisenbugs (where sometimes it fails and sometimes it doesn't). Stochastic failures - failures which happen randomly - lead you to trying things over and over again, hoping to get different results. Doing something again and expecting different results may not be the definition of insanity, and Einstein certainly didn't say it, but it's not great, and it trains you to flail.

Once I encountered this as a real debugging issue - resolving a problem with a robotic device driver for a lidar sensor (a laser radar, used to tell how close objects were to the robot). I was frustrated and thrashing with non-repeatable bugs in my program, and eventually cracked out the manufacturer's diagnostic program to see if I had a bad sensor. But the manufacturer's diagnostic also had the same problems, on more than one lidar unit, and I realized that correctly working sensors of that make and model were actually unreliable when connected to the computer we were using!

So how did I get traction when I literally couldn't trust the data coming from the sensor?

With a spreadsheet.

For each variant of the program that I tried - the original, and various fixes - I ran the program ten times, counted the successes and failures, and entered them into my spreadsheet. It very quickly became apparent that the original program almost never worked, whereas the best of my fixes worked seventy percent of the time. Since our experimental robots frequently needed to be rebooted multiple times on startup to fix other race conditions, we had no problem shipping "seventy percent success" as an improvement over ten percent.
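If you'd rather not open a spreadsheet, the same tally fits in a few lines of Python. This is only a minimal sketch: the variant names and the individual run outcomes are hypothetical stand-ins, chosen to match the ten-run, ten-percent and seventy-percent figures above, not the actual data from the lidar driver.

```python
def success_rate(outcomes):
    """Return the fraction of runs that succeeded (outcomes is a list of booleans)."""
    return sum(outcomes) / len(outcomes)


# Hypothetical tallies: ten runs per variant, True means the run succeeded.
# The names "original", "fix_a" and "fix_b" are illustrative only.
trials = {
    "original": [True] * 1 + [False] * 9,
    "fix_a": [True] * 4 + [False] * 6,
    "fix_b": [True] * 7 + [False] * 3,
}

# One success rate per variant, then pick the variant with the highest rate.
rates = {name: success_rate(runs) for name, runs in trials.items()}
best = max(rates, key=rates.get)

# Print the variants from worst to best, like a sorted spreadsheet column.
for name, rate in sorted(rates.items(), key=lambda item: item[1]):
    print(f"{name}: {rate:.0%} success over {len(trials[name])} runs")
print(f"best variant: {best}")
```

The point isn't the arithmetic, which is trivial; it's that writing the counts down turns "sometimes it works" into numbers you can compare between variants.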

Getting traction is a key part of science, engineering, and life. We can even apply it to philosophy, if we ask ourselves whether there are actual facts that help us choose between the options, or whether there are values that we hold that lead us to prefer one option over the other. In fact, many of the best philosophers produced their greatest work by taking definitive stands on one or more philosophical questions and then pursuing the implications rigorously. Some would even argue that modern physics is a kind of natural philosophy which took the stance of materialism to its logical conclusion - and then started producing fantastic empirical results by building on that stance.

So what problems in your life could you improve on if you found a way to push off from where you are?

-the Centaur

Pictured: We're fixing our roof, so we have to protect our floor. This floorpaper is actually to help our interior repair team move equipment without damaging our hardwoods, and does not have anything to do with traction, regardless of whether it looks like it's something used for that purpose.

Yeah, so that happened on my attempt to get some rest on my Sabbath day.

I'm not going to cite the book - I'm going to do the author the courtesy of re-reading the relevant passages to make sure I'm not misconstruing them, but I'm not going to wait to blog my reaction - but what caused me to throw this book, an analysis of the flaws of the scientific method, was this bit:

Imagine an experiment with two possible outcomes: the new theory (cough EINSTEIN) and the old one (cough NEWTON). Three instruments are set up. Two report numbers consistent with the new theory; the third one, missing parts, possibly configured improperly and producing noisy data, matches the old.

Wow! News flash: any responsible working scientist would say these results favored the new theory. In fact, if they were really experienced, they might have even thrown out the third instrument entirely - I've learned, based on red herrings from bad readings, that it's better not to look too closely at bad data.
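To make that intuition concrete, here's a minimal sketch of one standard way to combine readings of unequal quality: an inverse-variance weighted mean, where noisier instruments count for less. Every number below is hypothetical (made-up predictions, readings and uncertainties in arbitrary units), not data from the book or from any real experiment, and I'm not claiming this is the analysis the scientists actually performed.

```python
def weighted_mean(readings):
    """Inverse-variance weighted mean of (value, sigma) pairs."""
    weights = [1.0 / sigma ** 2 for _, sigma in readings]
    total = sum(w * value for w, (value, _) in zip(weights, readings))
    return total / sum(weights)


# Hypothetical predictions of the two theories, in arbitrary units.
NEW_THEORY = 2.0
OLD_THEORY = 1.0

# Hypothetical (value, sigma) readings: two healthy instruments and one
# noisy, misconfigured instrument with a much larger uncertainty.
readings = [(1.9, 0.2), (2.1, 0.3), (1.0, 1.0)]

combined = weighted_mean(readings)
closer_to_new = abs(combined - NEW_THEORY) < abs(combined - OLD_THEORY)

print(f"combined estimate: {combined:.2f}")
print("favors new theory" if closer_to_new else "favors old theory")
```

With these made-up numbers the noisy instrument carries only a few percent of the total weight, so the combined estimate stays close to what the two good instruments report. Throwing the bad instrument out entirely is the limiting case of the same idea.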

What did the author say, however? Words to the effect: "The scientists ignored the results from the third instrument which disproved their theory and supported the original, and instead, pushing their agenda, wrote a paper claiming that the results of the experiment supported their idea."

Pushing an agenda? Wait, let me get this straight, Chester Chucklewhaite: we should throw out two results from well-functioning instruments that support theory A in favor of one result from an obviously messed-up instrument that supports theory B - oh, hell, you're a relativity doubter, aren't you?

Chuck-toss.

I'll go back to this later, after I've read a few more sections of E. T. Jaynes's Probability Theory: The Logic of Science as an antidote.

-the Centaur

P. S. I am not saying relativity is right or wrong, friend. I'm saying the responsible interpretation of those experimental results as described would be precisely the interpretation those scientists put forward - though, in all fairness to the author of this book, the scientist involved appears to have been a super jerk.

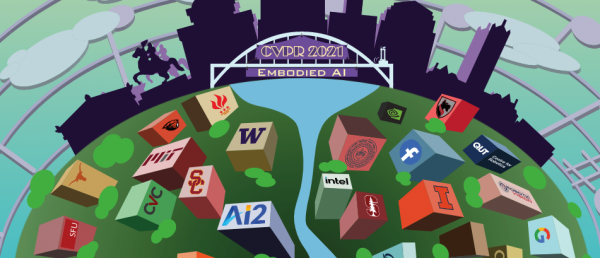

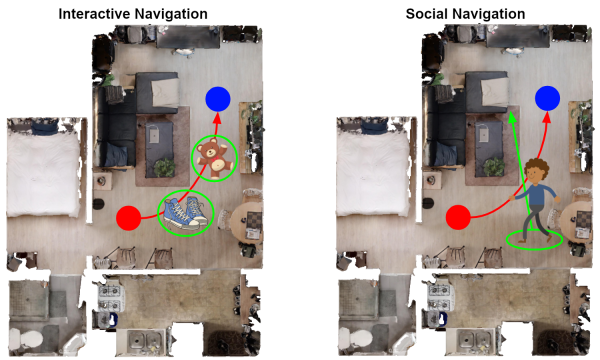

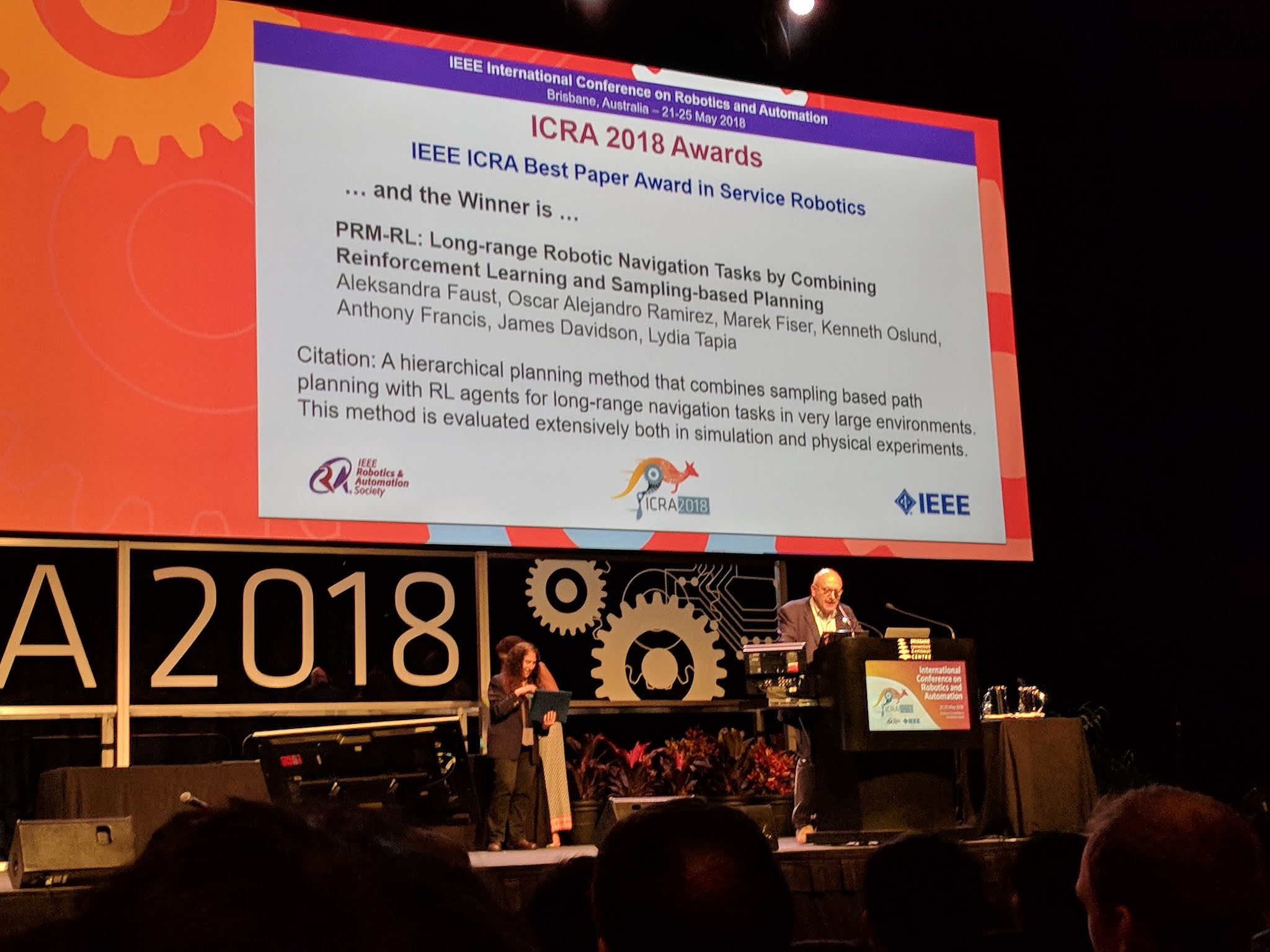

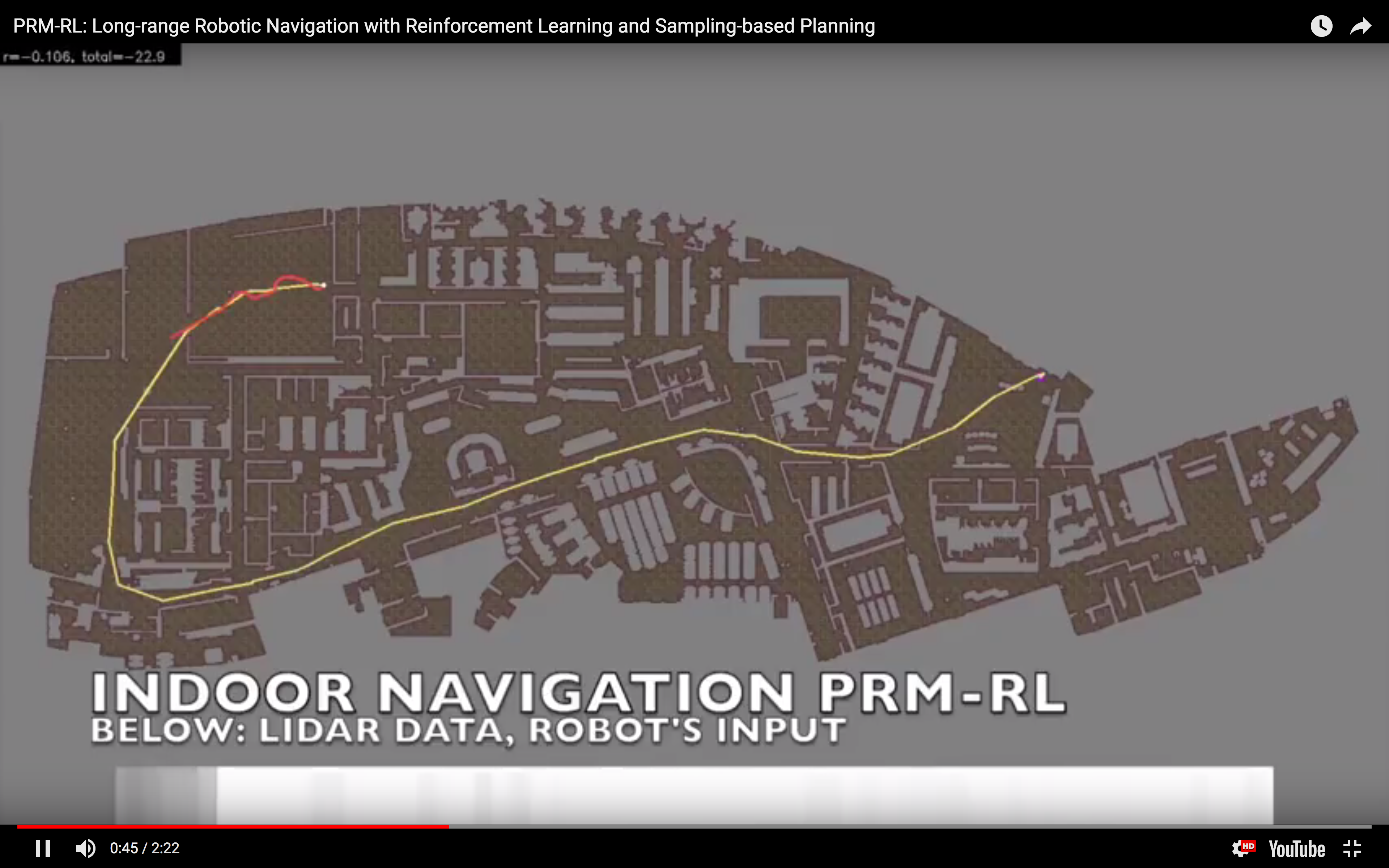

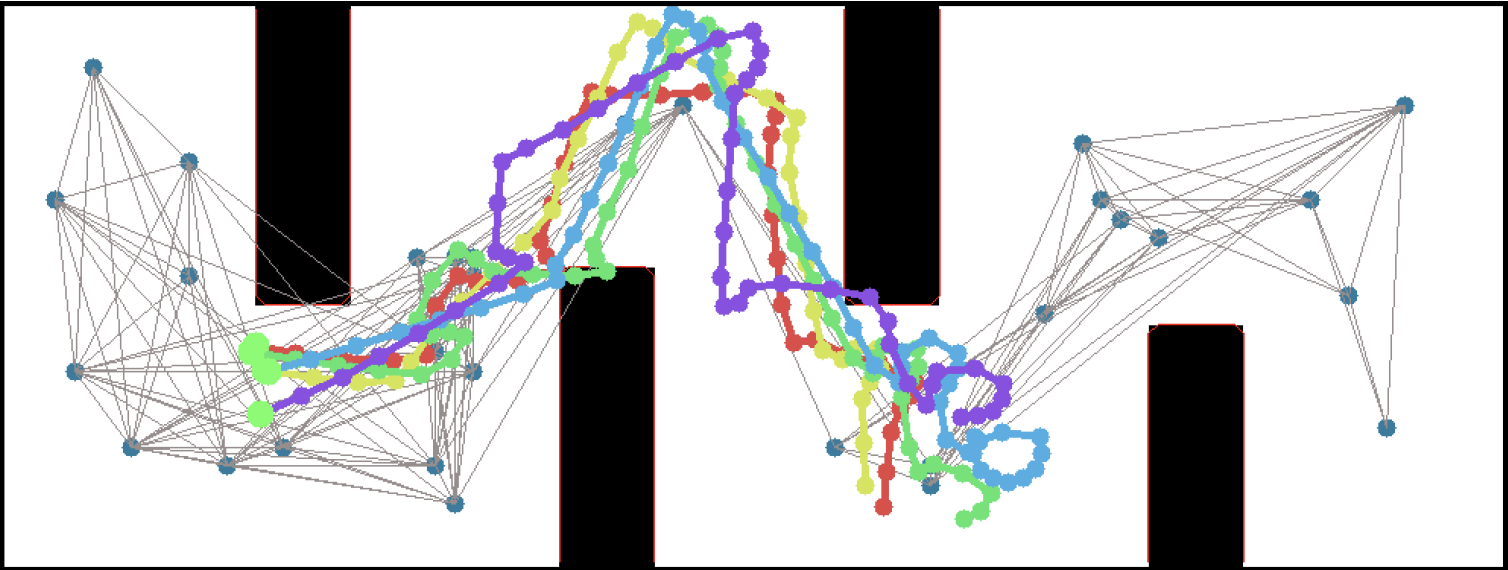

So, this happened! Our team's paper on "PRM-RL" - a way to teach robots to navigate their worlds which combines human-designed algorithms that use roadmaps with deep-learned algorithms to control the robot itself - won a best paper award at the ICRA robotics conference!

I talked a little bit about how PRM-RL works in the post "

We were cited not just for this technique, but for testing it extensively in simulation and on two different kinds of robots. I want to thank everyone on the team - especially Sandra Faust for her background in PRMs and for taking point on the idea (and doing all the quadrotor work with Lydia Tapia), Oscar Ramirez and Marek Fiser for their work on our reinforcement learning framework and simulator, Kenneth Oslund for his heroic last-minute push to collect the indoor robot navigation data, and our manager James for his guidance, contributions to the paper and support of our navigation work.

Woohoo! Thanks again everyone!

-the Centaur

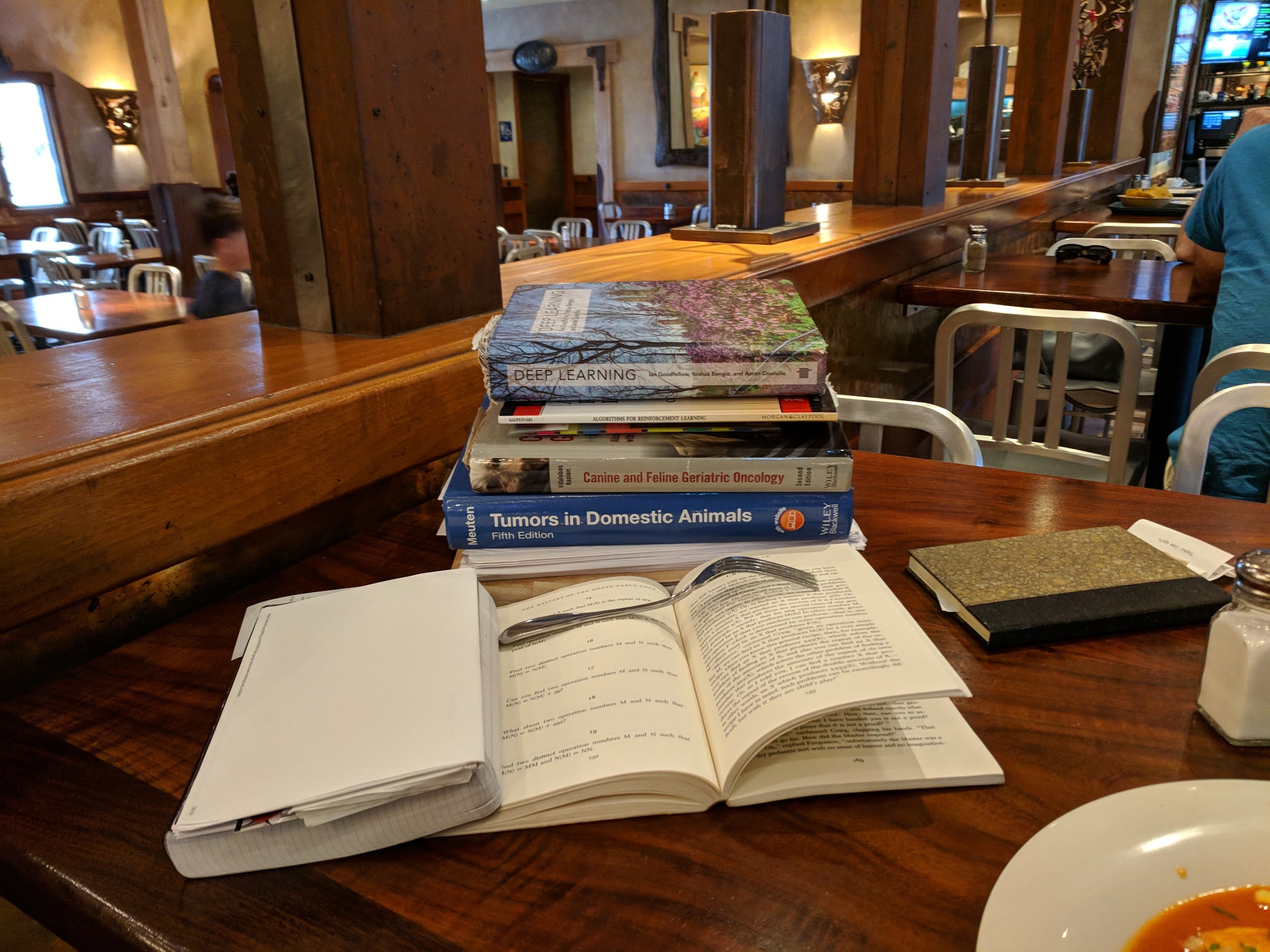

When I was a kid (well, a teenager) I'd read puzzle books for pure enjoyment. I'd gotten started with Martin Gardner's mathematical recreation books, but the ones I really liked were Raymond Smullyan's books of logic puzzles. I'd go to Wendy's on my lunch break at Francis Produce, with a little notepad and a book, and chew my way through a few puzzles. I'll admit I often skipped ahead if they got too hard, but I did my best most of the time.

I read more of these as an adult, moving back to the Martin Gardner books. But sometime, about twenty-five years ago (when I was in the thick of grad school) my reading needs completely overwhelmed my reading ability. I'd always carried huge stacks of books home from the library, never finishing all of them, frequently paying late fees, but there was one book in particular - The Emotions by Nico Frijda - which I finished but never followed up on.

Over the intervening years, I did finish books, but read most of them scattershot, picking up what I needed for my creative writing or scientific research. Eventually I started using the tiny little notetabs you see in some books to mark the stuff that I'd written, a "levels of processing" trick to ensure that I was mindfully reading what I wrote.

A few years ago, I admitted that wasn't enough, and consciously began trying to read ahead of what I needed to for work. I chewed through C++ manuals and planning books and was always rewarded a few months later when I'd already read what I needed to to solve my problems. I began focusing on fewer books in depth, finishing more books than I had in years.

Even that wasn't enough, and I began - at last - the re-reading project I'd hoped to do with The Emotions. Recently I did that with Dedekind's Essays on the Theory of Numbers, but now I'm doing it with Deep Learning. But some of that math is frickin' beyond where I am now, man. Maybe one day I'll get it, but sometimes I've spent weeks tackling a problem I just couldn't get.

Enter puzzles. As it turns out, it's really useful for a scientist to also be a science fiction writer who writes stories about a teenaged mathematical genius! I've had to simulate Cinnamon Frost's staggering intellect for the purpose of writing the Dakota Frost stories, but the further I go, the more I want her to be doing real math. How did I get into math? Puzzles!

So I gave her puzzles. And I decided to return to my old puzzle books, some of the ones I got later but never fully finished, and to give them the deep reading treatment. It's going much slower than I like - I find myself falling victim to the "rule of threes" (you can do a third of what you want to do, often in three times as much time as you expect) - but then I noticed something interesting.

Some of Smullyan's books in particular are thinly disguised math books. In some parts, they're even the same math I have to tackle in my own work. But unlike the other books, these problems are designed to be solved, rather than a reflection of some chunk of reality which may be stubborn; and unlike the other books, these have solutions along with each problem.

So, I've been solving puzzles ... with careful note of how I have been failing to solve puzzles. I've hinted at this before, but understanding how you, personally, usually fail is a powerful technique for debugging your own stuck points. I get sloppy, I drop terms from equations, I misunderstand conditions, I overcomplicate solutions, I grind against problems where I should ask for help, I rabbithole on analytical exploration, and I always underestimate the time it will take for me to make the most basic progress.

Know your weaknesses. Then you can work those weak mental muscles, or work around them to build complementary strengths - the way Richard Feynman would always check over an equation when he was done, looking for those places where he had flipped a sign.

Back to work!

-the Centaur

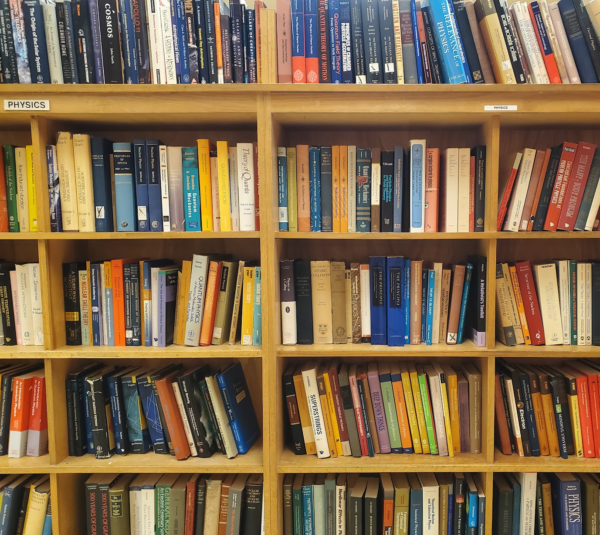

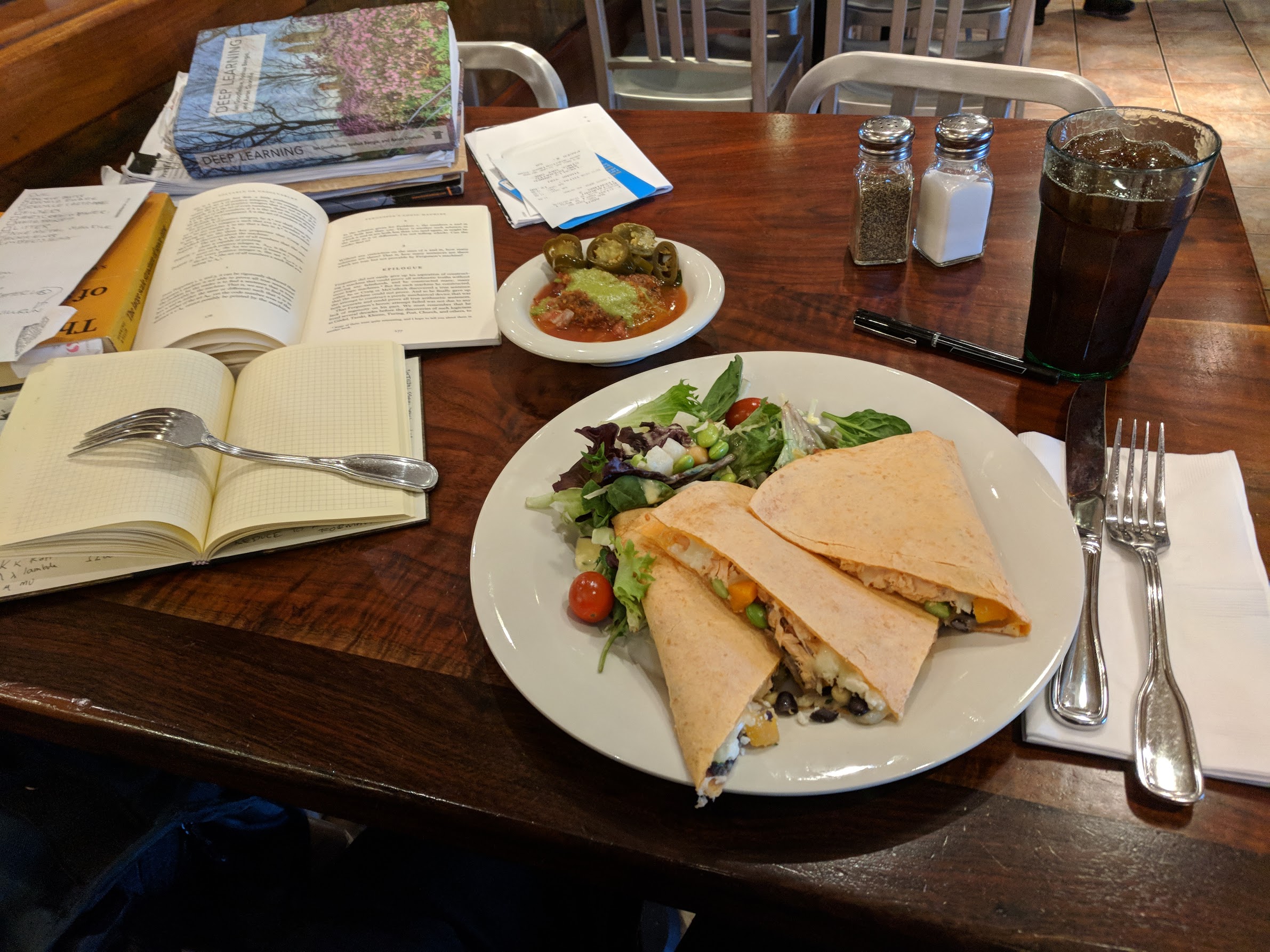

Pictured: my "stack" at a typical lunch. I'll usually get to one out of three of the things I bring for myself to do. Never can predict which one though.

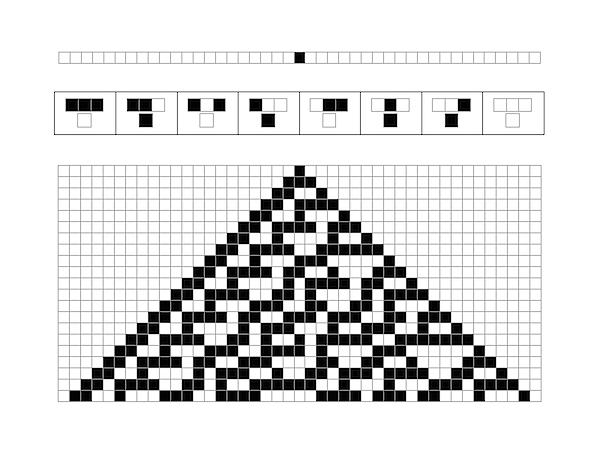

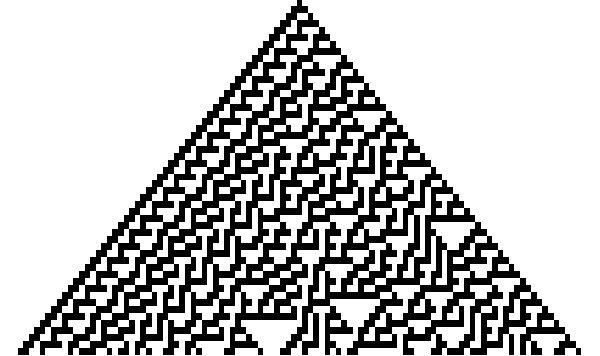

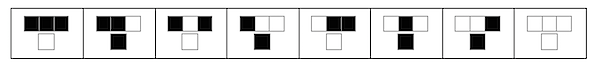

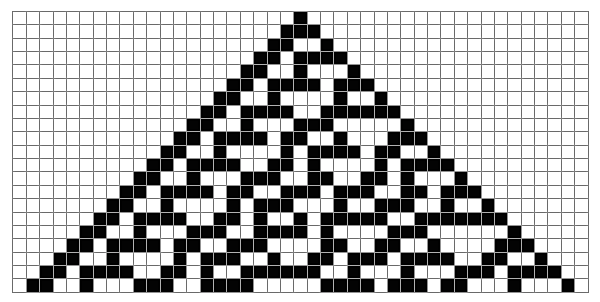

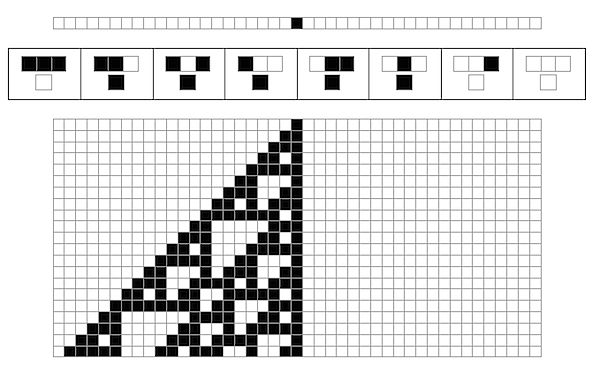

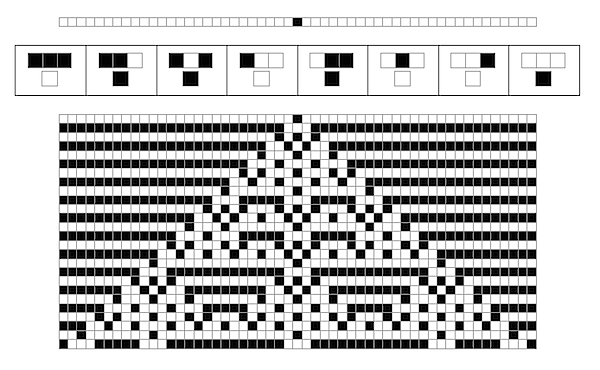

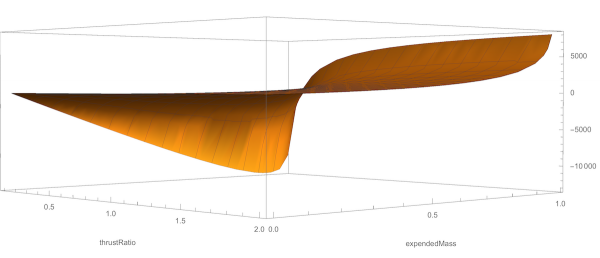

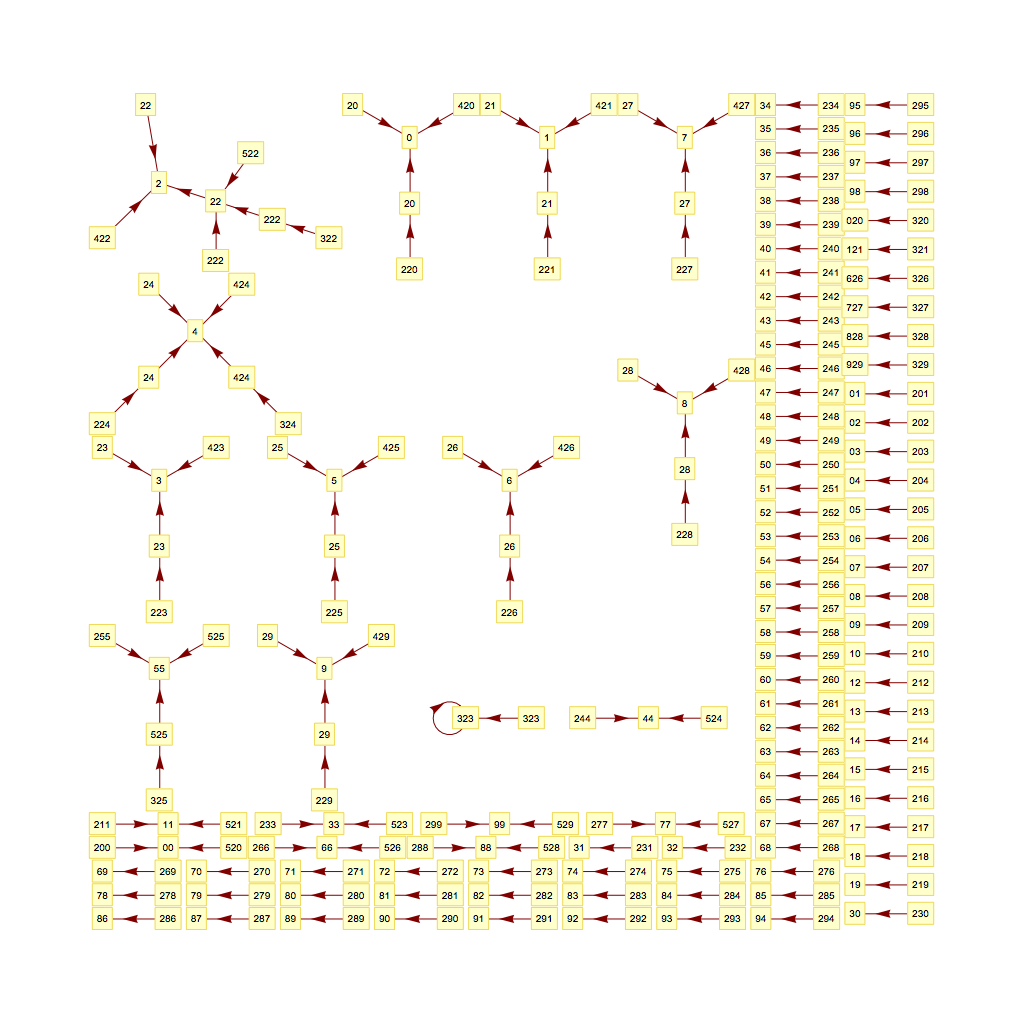

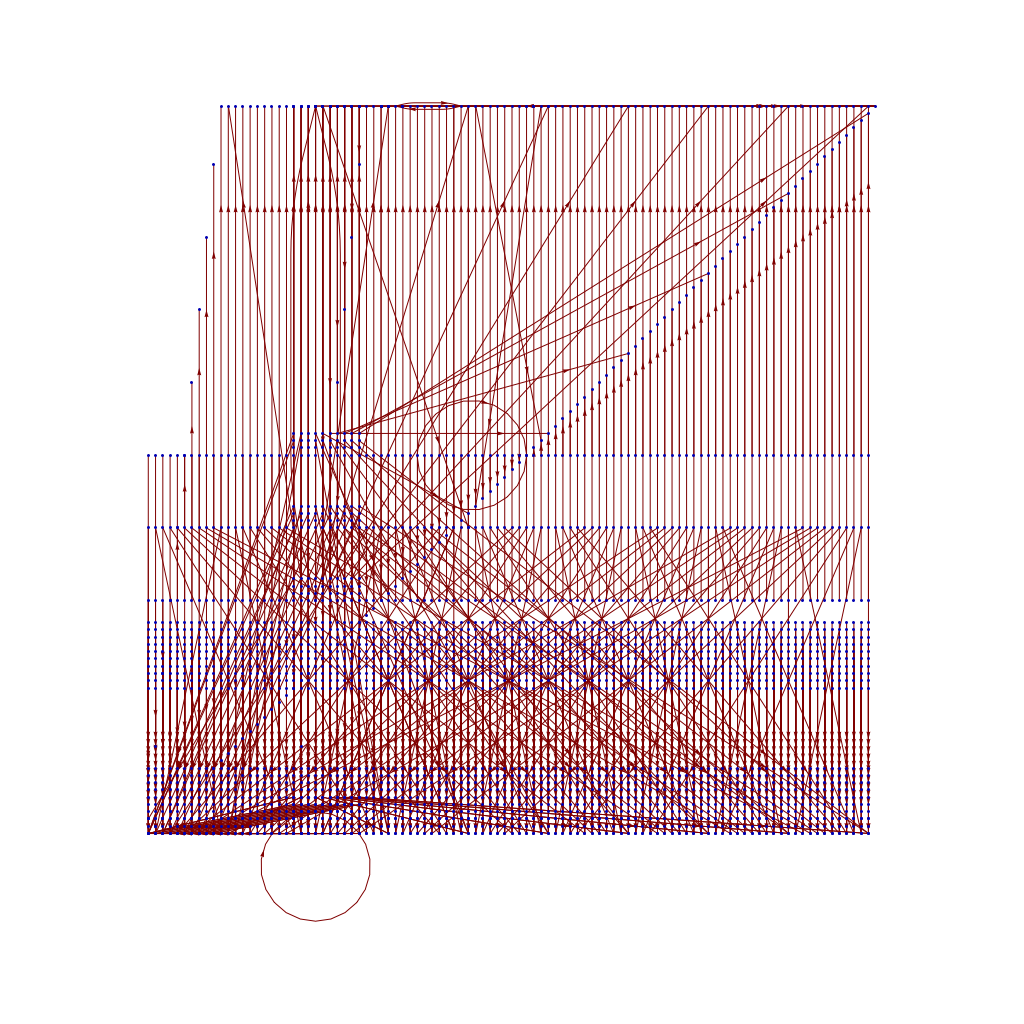

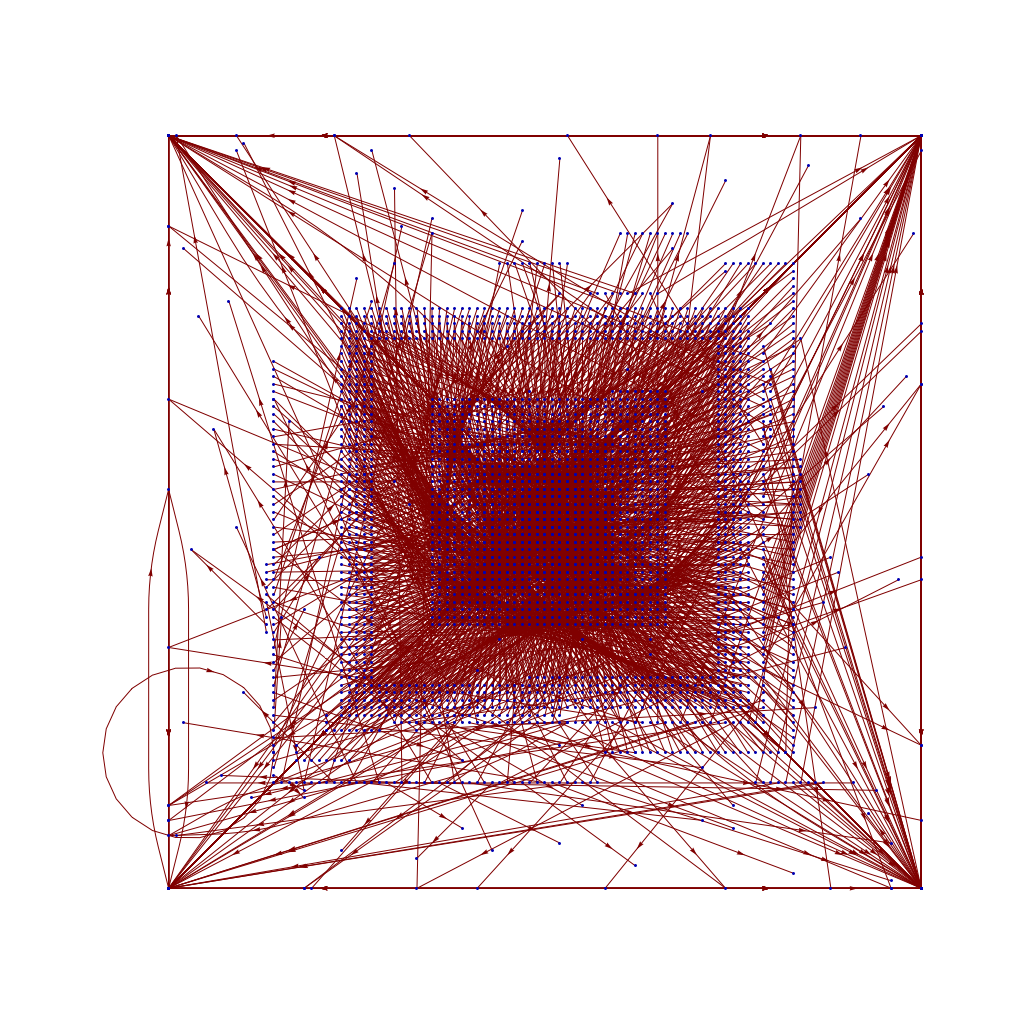

SO! There I was, trying to solve the mysteries of the universe, learn about deep learning, and teach myself enough puzzle logic to create credible puzzles for the Cinnamon Frost books, and I find myself debugging the fine details of a visualization system I've developed in Mathematica to analyze the distribution of problems in an odd middle chapter of Raymond Smullyan's

I meant well! Really I did. I was going to write a post about how finding a solution is just a little bit harder than you normally think, and how insight sometimes comes after letting things sit.

But the tools I was creating didn't do what I wanted, so I went deeper and deeper down the rabbit hole trying to visualize them.

The short answer seems to be that there's no "there" there and that further pursuit of this sub-problem will take me further and further away from the real problem: writing great puzzles!

I learned a lot - about numbers, about how things could combinatorially explode, about

I often say "I teach robots to learn," but what does that mean, exactly? Well, now that one of the projects that I've worked on has been announced - and I mean, not just on

This work includes both our group working on office robot navigation - including Alexandra Faust, Oscar Ramirez, Marek Fiser, Kenneth Oslund, me, and James Davidson - and Alexandra's collaborator Lydia Tapia, with whom she worked on the aerial navigation also reported in the paper. Until the ICRA version comes out, you can find the preliminary version on arXiv:

Wow. After nearly 21 years, my first published short story, “Sibling Rivalry”, is returning to print. Originally an experiment to try out an idea I wanted to use for a longer novel, ALGORITHMIC MURDER, I quickly found that I’d caught a live wire with “Sibling Rivalry”, which was my first sale to The Leading Edge magazine back in 1995.

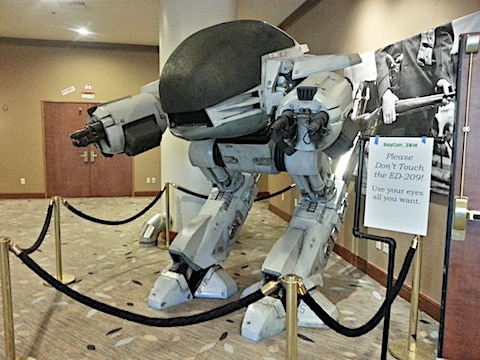

“Sibling Rivalry” was born of frustrations I had as a graduate student in artificial intelligence (AI) watching shows like Star Trek in which Captain Kirk talks a computer to death. No-one talks anyone to death outside of a Hannibal Lecter movie or a bad comic book, much less in real life, and there’s no reason to believe feeding a paradox to an AI will make it explode.

But there are ways to beat one, depending on how they’re constructed - and the more you know about them, the more potential routes there are for attack. That doesn’t mean you’ll win, of course, but … if you want to know, you’ll have to wait for the story to come out.

“Sibling Rivalry” will be the second book in Thinking Ink Press's Snapbook line, with another awesome cover by my wife Sandi Billingsley, interior design by Betsy Miller and comments by my friends Jim Davies and Kenny Moorman, the latter of whom uses “Sibling Rivalry” to teach AI in his college courses. Wow! I’m honored.

Our preview release will be at the
